The AI Cycle Is Shifting From Training to Inference

The market narrative around Nvidia has largely centered on the explosive demand for training large language models. Training clusters built the first wave of AI infrastructure. But the more important shift now emerging is a rotation of AI demand from training capacity to inference capacity.

That distinction matters.

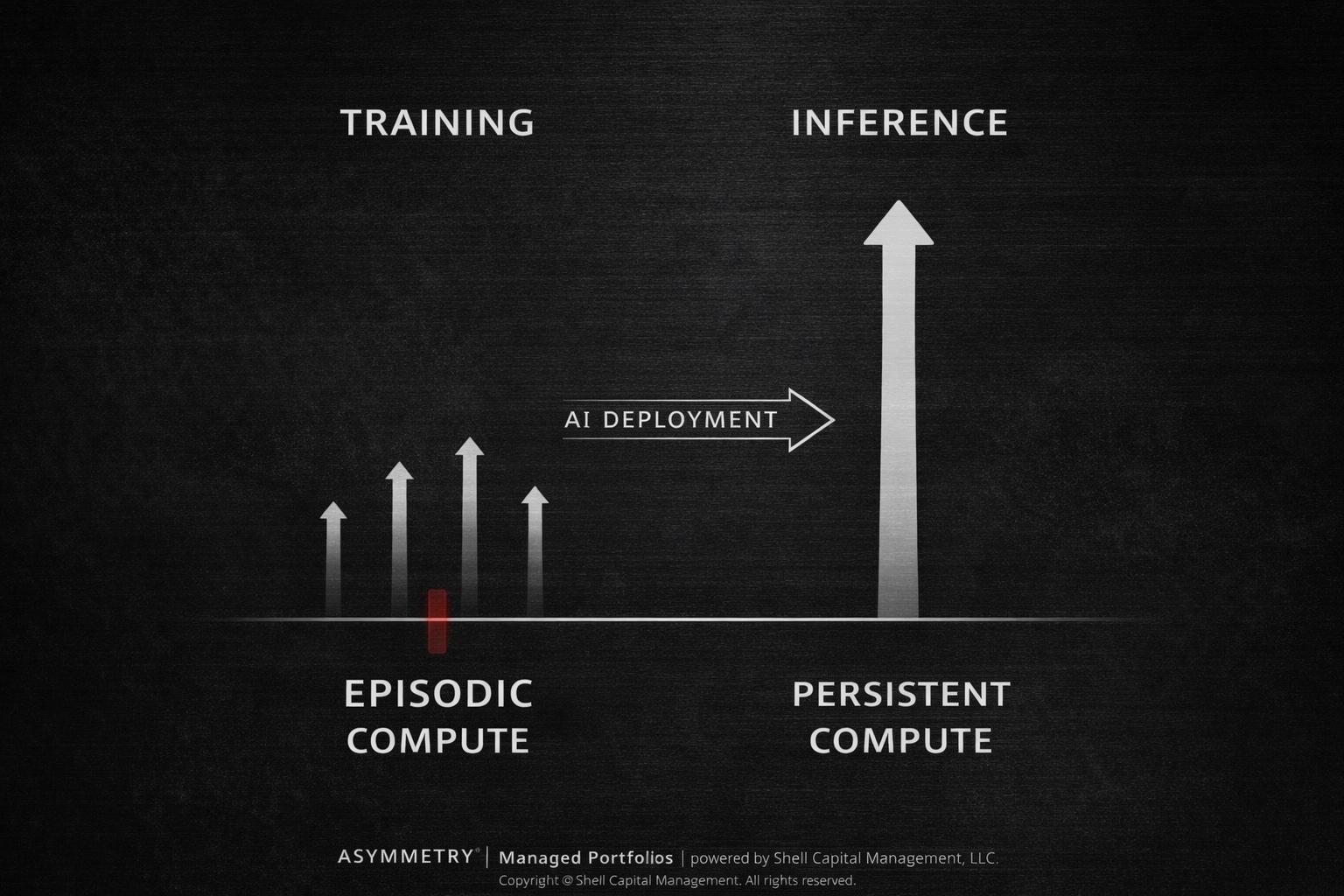

Training a model is episodic. It happens in bursts: build the model, update it, retrain it. Inference is persistent. Once a model exists, it must run continuously to serve queries, power applications, and, increasingly, operate autonomous systems.

In other words, training creates intelligence. Inference deploys it.

And deployment scales.

Goldman Sachs highlighted this dynamic following Nvidia’s 2026 GTC keynote, noting that Nvidia disclosed over $1 trillion of datacenter revenue visibility through 2027 across its Blackwell and Rubin platforms. Just a year earlier, the company had disclosed roughly $500 billion through 2026. The disclosed figure roughly doubled, though over a window that ends a year later. The expansion in forward demand suggests that hyperscaler AI infrastructure spending trends remain intact and may extend longer than many expected.

But the more structural development was Nvidia’s emphasis on inference infrastructure.

The company introduced a new Groq LPX rack architecture designed specifically for inference workloads, claiming dramatically higher throughput per watt and materially greater revenue opportunity for trillion-parameter models compared with the Blackwell training platform.

This signals a transition in the AI compute stack.

The first phase of the AI buildout required enormous clusters to train frontier models. The next phase requires infrastructure capable of running those models constantly across enterprises, software platforms, and autonomous systems.

If training clusters built the intelligence layer, inference clusters build the operational layer.

That operational layer could ultimately require far more compute.

A single training run may occupy a large cluster of GPUs for weeks or months and then end. Inference must operate every second of every day across millions of users, applications, and agents. As AI systems proliferate, inference demand scales with usage rather than with development.
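To make the contrast concrete, here is a rough back-of-the-envelope sketch in Python. Every input (cluster size, run length, runs per year, GPU-seconds per query, query volumes) is a hypothetical placeholder chosen only for illustration, not a figure from Nvidia, Goldman Sachs, or any other source. The function names are likewise invented for this example.

```python
# Illustrative only: all inputs are made-up assumptions, not reported data.

def training_gpu_hours(gpus: int, days: int, runs_per_year: int) -> float:
    """GPU-hours consumed in one year by episodic training runs."""
    return gpus * days * 24 * runs_per_year


def inference_gpu_hours(daily_queries: float, gpu_seconds_per_query: float) -> float:
    """GPU-hours consumed in one year by serving queries every day."""
    return daily_queries * gpu_seconds_per_query * 365 / 3600


def breakeven_daily_queries(train_hours: float, gpu_seconds_per_query: float) -> float:
    """Daily query volume at which annual inference equals annual training."""
    return train_hours * 3600 / (365 * gpu_seconds_per_query)


if __name__ == "__main__":
    # Hypothetical training program: 10,000 GPUs, 90-day runs, two runs a year.
    train = training_gpu_hours(gpus=10_000, days=90, runs_per_year=2)

    # Training compute is fixed by the development schedule;
    # inference compute grows linearly with usage.
    for daily_queries in (1e6, 1e8, 1e10):
        infer = inference_gpu_hours(daily_queries, gpu_seconds_per_query=2.0)
        print(f"{daily_queries:.0e} queries/day: inference/training = {infer / train:.2f}")

    print(f"break-even: {breakeven_daily_queries(train, 2.0):.3e} queries/day")
```

Under these assumed inputs, training compute is a fixed annual quantity while inference compute is a straight line in query volume, so there is always some usage level beyond which inference dominates. Where that level sits depends entirely on the assumptions, which is why the adoption curve is the variable to watch.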

Nvidia also emphasized this shift through the introduction of agentic AI infrastructure, including the NemoClaw platform designed to support autonomous AI agents operating continuously within enterprise systems.

Agentic systems represent a different type of workload. Instead of responding to occasional prompts, agents monitor environments, execute tasks, interact with software, and make decisions around the clock.

That architecture naturally multiplies inference demand.

The implication is straightforward.

The AI infrastructure cycle may be less about a single burst of model training and more about the long-duration deployment of AI across the global economy.

For investors, the key question isn’t whether AI models can be trained. That milestone has already been achieved. The question is whether AI systems become embedded into everyday processes—enterprise software, autonomous workflows, decision systems, and real-time applications.

If that adoption curve accelerates, inference becomes the dominant driver of AI compute demand.

And inference infrastructure behaves differently than training infrastructure.

Training demand can spike and normalize. Inference demand scales with usage.

This is why Nvidia is increasingly positioning itself not simply as a semiconductor company but as an AI datacenter architecture provider.

The company’s roadmap now spans GPUs, networking fabrics, optical switching, rack-level systems, and software platforms. Nvidia’s Rubin architecture is designed to scale to hundreds of GPUs within a single rack-scale system, while its Spectrum networking products tie compute and networking into a unified AI infrastructure stack.

That vertical integration raises switching costs for customers and expands Nvidia’s share of AI datacenter spending.

Instead of selling chips, Nvidia increasingly sells entire AI factories.

For businesses deploying AI at scale, the value proposition shifts from individual components to system-level performance: throughput, power efficiency, networking latency, and integrated software stacks.

In other words, the competitive battlefield is moving from silicon to system architecture.

From an asymmetry perspective, a market focused on training demand may be underestimating the structural scale of inference demand if AI adoption broadens.

Training built the models.

Inference determines whether those models become infrastructure.

If AI evolves into a persistent computational layer embedded across industries, the demand for inference compute could exceed the demand that drove the initial training buildout.

That doesn’t eliminate risks. Goldman Sachs highlighted several that could interrupt the cycle: a slowdown in hyperscaler infrastructure spending, increasing competition, margin pressure, or supply constraints.

But the larger structural shift remains.

The AI cycle is moving from building intelligence to deploying it.

And deployment tends to be where scale emerges.

The key takeaway from Nvidia’s GTC announcements isn’t just stronger datacenter demand visibility. It’s the recognition that AI infrastructure is evolving from episodic model training toward persistent inference workloads powering applications and autonomous systems.

If AI becomes embedded across enterprise software, digital services, and autonomous workflows, inference infrastructure may ultimately become the largest component of the AI compute stack.

That transition is where the next phase of the AI cycle—and potentially the largest source of demand—may emerge.

Mike Shell is the founder and chief investment officer of Shell Capital Management, LLC, a registered investment adviser. He serves as portfolio manager of ASYMMETRY® Managed Portfolios, a separately managed account program with trade execution and custody provided by Goldman Sachs Custody Solutions.

ASYMMETRY® Observations are provided for general informational and educational purposes only. They do not constitute investment advice, a recommendation, or an offer to buy or sell any security or investment strategy. The content is not intended to be a complete description of Shell Capital’s investment process and should not be relied upon as the sole basis for any investment decision.

Any securities, charts, indicators, formulas, or examples referenced are illustrative and are not intended to represent actual client portfolios, recommendations, or trading activity. Past performance is not indicative of future results. All investing involves risk, including the possible loss of principal.

Opinions expressed reflect the judgment of the author at the time of publication and are subject to change without notice as market conditions evolve. Information is believed to be reliable but is not guaranteed, and readers are encouraged to independently verify any information before making investment decisions.

Shell Capital Management, LLC provides investment advisory services only to clients pursuant to a written investment management agreement and only in jurisdictions where the firm is properly registered or exempt from registration.